- Steps for installing and configuring a Kubernetes cluster.

- Deployment strategies like rolling updates and blue-green deployments.

- Managing resources, including scaling applications.

Most software companies are looking for proven ways to build, manage, and scale containerized applications and deliver a seamless customer experience. Kubernetes is an orchestrator for containerized, cloud-native microservice applications. It makes those applications easier to deploy and manage, improves their reliability, and reduces the time engineers spend on DevOps automation.

Enterprises that embrace the public cloud for critical modern applications face several challenges:

- Higher human cost of running services

- Greater complexity in running services

- Manual setup of services

- Larger bills from public cloud providers

- Manual recovery work when a node crashes

An orchestrator helps overcome these challenges by automating operations such as deployment and scaling.

Before we dive deeper into Kubernetes, let’s understand what an orchestrator is.

What is an Orchestrator?

An orchestrator is a system or tool that deploys and manages applications dynamically and responds to changes made by developers. As a result, your business can deploy applications and scale them up and down based on demand. An orchestrator also provides self-healing when something breaks, zero-downtime rolling updates, rollbacks, and more.
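The self-healing and scaling behavior described above can be pictured as a loop that keeps comparing the desired state with the observed state and corrects any difference. The sketch below is a toy illustration only: `ToyCluster`, `start_instance`, `stop_instance`, and `reconcile` are hypothetical names invented for this example and are not part of any Kubernetes API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToyCluster:
    """Hypothetical in-memory stand-in for a cluster (not a real API)."""
    desired_replicas: int
    running: List[str] = field(default_factory=list)
    _counter: int = 0

    def start_instance(self) -> None:
        # Give every new instance a unique name.
        self._counter += 1
        self.running.append(f"app-{self._counter}")

    def stop_instance(self) -> None:
        # Stop the most recently started instance.
        self.running.pop()


def reconcile(cluster: ToyCluster) -> None:
    """Drive the observed state toward the desired state."""
    while len(cluster.running) < cluster.desired_replicas:
        cluster.start_instance()
    while len(cluster.running) > cluster.desired_replicas:
        cluster.stop_instance()


if __name__ == "__main__":
    cluster = ToyCluster(desired_replicas=3)
    reconcile(cluster)                # initial deployment
    cluster.running.remove("app-2")   # simulate a crashed instance
    reconcile(cluster)                # self-healing: a replacement is started
    cluster.desired_replicas = 1      # demand drops
    reconcile(cluster)                # scale down
    print(cluster.running)
```

The same function handles the initial deployment, crash recovery, and scaling, because all three are just a gap between what is wanted and what is running.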

To understand Kubernetes, we need basic knowledge of the kinds of applications where Kubernetes is effective:

- Containerized app – An application that runs in a container.

- Cloud-native app – An application that can satisfy business demands such as self-healing and rolling updates.

- Microservices app – An application built from many small, independent services that work together.

An orchestrator helps us manage and organize multiple containers so that all containers stay healthy and are distributed across a clustered environment. There are two well-known orchestrators: Kubernetes and Docker Swarm.

Kubernetes Tutorial: Kubernetes vs. Docker Swarm

Kubernetes and Docker Swarm are the most widely used container orchestration tools in today’s market. Over the years, however, Kubernetes has become the clear winner of this race, with the largest market share.

Compared with Docker Swarm, Kubernetes has a large, active community and is used by many organizations operating at high scale. Swarm, on the other hand, is easier to set up, but it is limited by its API.

What is Kubernetes?

Kubernetes is a portable, extensible, open-source platform for managing containerized workloads and services that facilitates both declarative configuration and automation. It has a large, rapidly growing ecosystem.

Aspects of Kubernetes:

At the highest level, Kubernetes includes two things:

- Cluster for running applications.

- Orchestrator for cloud-native microservices apps

Kubernetes as Cluster:

A Kubernetes cluster (Kube for short) consists of a set of nodes and a control plane. The control plane exposes the API, runs the scheduler that assigns tasks to nodes, and records the cluster state. Nodes are where application services run.
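To make the idea of a scheduler assigning work to nodes more concrete, here is a minimal sketch of a filter-then-score placement decision. It is a toy model, not the actual kube-scheduler algorithm: the `Node` class, the `schedule` function, and the "most free CPU" scoring rule are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    """Hypothetical view of a node's remaining capacity."""
    name: str
    free_cpu_millis: int
    free_memory_mib: int


def schedule(cpu_millis: int, memory_mib: int, nodes: List[Node]) -> Optional[Node]:
    """Pick a node for a workload, or return None if nothing fits."""
    # Step 1 (filter): keep only nodes with enough free CPU and memory.
    feasible = [
        n for n in nodes
        if n.free_cpu_millis >= cpu_millis and n.free_memory_mib >= memory_mib
    ]
    if not feasible:
        return None
    # Step 2 (score): toy rule, prefer the node with the most free CPU.
    chosen = max(feasible, key=lambda n: n.free_cpu_millis)
    # Step 3 (bind): reserve the resources on the chosen node.
    chosen.free_cpu_millis -= cpu_millis
    chosen.free_memory_mib -= memory_mib
    return chosen


if __name__ == "__main__":
    nodes = [
        Node("node-a", free_cpu_millis=500, free_memory_mib=1024),
        Node("node-b", free_cpu_millis=2000, free_memory_mib=512),
        Node("node-c", free_cpu_millis=1000, free_memory_mib=2048),
    ]
    placed = schedule(cpu_millis=400, memory_mib=1000, nodes=nodes)
    print(placed.name if placed else "unschedulable")
```

In this example `node-b` has the most CPU but is filtered out for lack of memory, so the workload lands on `node-c`.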

Kubernetes as Orchestrator:

As an orchestrator, Kube takes care of deploying and managing applications. It organizes everything that makes up an app and makes sure it all keeps running smoothly.

Kubernetes as an orchestrator offers features such as:

- Automated scheduling: Kubernetes provides an advanced scheduler to launch containers on cluster nodes.

- Self-healing capabilities: Kubernetes reschedules, replaces, and restarts dead containers.

- Automated rollouts and rollbacks: Kubernetes supports rollouts and rollbacks to reach the desired state of the containerized application.

- Horizontal scaling and load balancing: Kubernetes can scale the application up and down as requirements change.
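For horizontal scaling, the Kubernetes documentation describes the Horizontal Pod Autoscaler as computing the desired replica count from the ratio of the current metric value to the target value. The sketch below reproduces only that core formula plus clamping to a minimum and maximum; the function name and the default bounds are assumptions for this example, and the real autoscaler applies further rules (such as a tolerance band and stabilization) that are omitted here.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,    # assumed default for this sketch
    max_replicas: int = 10,   # assumed default for this sketch
) -> int:
    """desired = ceil(current_replicas * current_metric / target_metric)."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Keep the result inside the configured bounds.
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # 4 replicas at 90% average CPU with a 60% target: scale up.
    print(desired_replicas(4, 90, 60))   # 6
    # 4 replicas at 30% average CPU with a 60% target: scale down.
    print(desired_replicas(4, 30, 60))   # 2
```

When the load is far above the target, the raw result can exceed the upper bound, in which case the clamp caps it at `max_replicas`.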

Kubernetes Architecture:

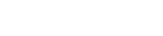

Kubernetes Architecture has the following main components:

- Master nodes

- Worker/Slave nodes

Nodes run on Linux hosts, which can be VMs, bare-metal machines, or servers in the public cloud.

Master (Control Plane)

The master is a collection of system services that make up the control plane of the cluster. In other words, it is the entry point for all administrative tasks. A cluster can have more than one master node for fault tolerance.

The master node has the following components:

- API server

- Controller manager

- Scheduler

- etcd

Nodes:

Nodes are the workers of a Kubernetes cluster. They do three things:

- Watch the API server for new work assignments

- Execute new work assignments

- Report back to the control plane through the API server

A node has three major components:

- Kubelet

- Container runtime

- Kube-proxy

Pod:

The smallest deployable unit in Kube is called a pod. The simplest model is to run a single container inside a single pod, but a pod can be built to run one or more containers. Pods have a limited life span: they are created, and they die. If a pod dies unexpectedly, Kube starts a new one in its place (when the pod is managed by a controller such as a Deployment).

How do pods operate when they hold more than one container? All containers in a pod share the following:

- A single IP address

- The localhost interface

- A shared IPC space

- A shared network port range

- Shared volumes

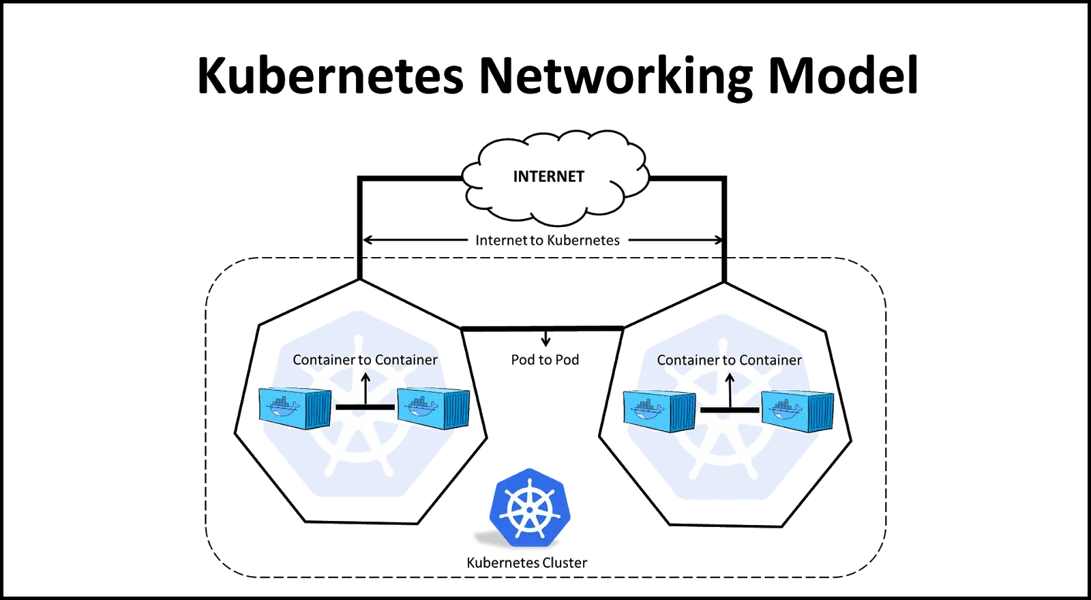
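As an illustration of a pod with more than one container, the sketch below builds a Pod manifest as a Python dictionary and prints it as JSON. The manifest fields (`apiVersion`, `kind`, `metadata`, `spec.containers`, `spec.volumes`, `emptyDir`, `volumeMounts`) are standard Pod fields, while the pod name, container names, images, and paths are assumptions chosen for this example.

```python
import json


def build_pod_manifest(name: str) -> dict:
    """Return a two-container Pod manifest that shares one volume."""
    shared_mount = {"name": "shared-data", "mountPath": "/data"}
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # An emptyDir volume lives as long as the pod does.
            "volumes": [{"name": "shared-data", "emptyDir": {}}],
            "containers": [
                {
                    # Hypothetical web server that reads from the shared volume.
                    "name": "web",
                    "image": "nginx",
                    "volumeMounts": [shared_mount],
                },
                {
                    # Hypothetical helper that writes into the shared volume.
                    "name": "writer",
                    "image": "busybox",
                    "command": [
                        "sh",
                        "-c",
                        "while true; do date > /data/index.html; sleep 5; done",
                    ],
                    "volumeMounts": [shared_mount],
                },
            ],
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_pod_manifest("two-container-demo"), indent=2))
```

Both containers mount the same volume, and because they sit in one pod they would also share the pod's IP address and could reach each other over localhost. Since kubectl accepts JSON as well as YAML, the printed output could be saved to a file and applied with `kubectl apply -f`.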

Kubernetes networking:

The Kubernetes networking model imposes the following constraints:

- Pods can communicate with all other Pods without network address translation (NAT).

- Nodes can communicate with all Pods without NAT.

- The IP address that a Pod sees for itself is the same IP address that other Pods see for it.

Within these constraints, you keep control over how networking is implemented. Kubernetes networking addresses only a few concerns:

- Container-to-container communication

- Pod-to-pod communication

- Pod-to-service communication

- Internet-to-service communication

Conclusion:

The Anblicks team can help you deploy Kubernetes services, monitor your app’s performance, and secure your application to accelerate your digital business growth. Anblicks also provides cloud and infrastructure automation services to industries such as logistics, manufacturing, automotive, healthcare, and retail, including easy migration of apps to the cloud to improve your ROI.

If you are planning to deploy Kubernetes today or want to learn more, please get in touch with us.