Optimize Business KPIs by Making Effective Actionable Decisions Using Causal ML

TL;DR

- Causal machine learning aims to uncover the true cause-and-effect relationships that affect business KPIs.

- It enables data-driven decisions based on what would happen under an intervention, not only on correlations.

- This approach offers a deeper understanding of the key drivers of a KPI.

Causal Analysis in Azure Machine Learning Studio helps answer your causal questions through an end-to-end automated framework.

In this blog post, we look at scenarios where the most commonly used ML modeling techniques can misinterpret the real relationships in the data. We then try to shift this paradigm: instead of relying on spurious correlations, we look for actionable insights by estimating causal relationships and measuring how effectively a treatment moves the target KPI.

Motivation for Causal ML

Suppose we have historical, observational data showing that 5% of a product’s customers churned last year. Every business owner’s goal is to decrease this percentage by running a targeted campaign. We usually build a classical predictive propensity model of churn (the propensity score here is the probability of churn given customer-behavior covariates such as CLV, RFM, etc.) and then offer discounts or upsell/cross-sell deals to customers whose score exceeds a chosen threshold.

Now the churn manager wants to know how effective the campaign was: are customers retained because of the promoted offers or marketing campaigns, or is it the other way around? Answering this traditionally requires A/B testing, the gold standard of experimentation, which takes time and can be expensive or infeasible in certain situations.

We need to think beyond propensity modeling. Supervised churn predictions are useful, but not always sufficient, because they cannot recommend the next best action in what-if scenarios. Targeting the customers who will respond positively to a marketing offer (the persuadables), without wasting money on lost causes, so that we can intervene with the next best action and change future outcomes such as the retention rate, is the problem that uplift modeling addresses in causal inference.
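To make the difference between churn propensity and uplift concrete, here is a minimal simulation sketch. The customer profiles, baseline retention rates, and true uplifts are hypothetical numbers chosen for illustration, and the campaign is assumed to be randomly assigned, so simple group differences are valid uplift estimates. With a single categorical feature, the two-model (T-learner) approach reduces to comparing retention rates of treated and untreated customers within each profile; churn risk is read from the untreated arm.

```python
import random
from collections import defaultdict

# Hypothetical ground truth for the simulation:
# profile -> (retention probability without campaign, true lift from campaign)
PROFILES = {
    "new_low_engagement": (0.30, 0.00),     # high churn risk, but a lost cause
    "new_high_engagement": (0.50, 0.25),    # persuadable
    "loyal_high_engagement": (0.95, 0.00),  # sure thing
    "loyal_low_engagement": (0.80, -0.10),  # sleeping dog: the offer backfires
}


def simulate(n, seed=0):
    """Generate (profile, treated, retained) rows with randomized treatment."""
    rng = random.Random(seed)
    names = list(PROFILES)
    rows = []
    for _ in range(n):
        profile = rng.choice(names)
        treated = rng.random() < 0.5  # randomized campaign assignment
        base, lift = PROFILES[profile]
        p_retain = base + (lift if treated else 0.0)
        rows.append((profile, treated, rng.random() < p_retain))
    return rows


def t_learner_by_profile(rows):
    """Two-model uplift: one retention 'model' per arm, here a per-profile mean."""
    counts = defaultdict(lambda: [0, 0])  # (profile, treated) -> [retained, total]
    for profile, treated, retained in rows:
        counts[(profile, treated)][0] += retained
        counts[(profile, treated)][1] += 1
    rate = {key: r / t for key, (r, t) in counts.items()}
    churn_risk = {p: 1 - rate[(p, False)] for p in PROFILES}
    uplift = {p: rate[(p, True)] - rate[(p, False)] for p in PROFILES}
    return churn_risk, uplift


rows = simulate(200_000)
churn_risk, uplift = t_learner_by_profile(rows)
print("target by churn risk:", max(churn_risk, key=churn_risk.get))
print("target by uplift:    ", max(uplift, key=uplift.get))
```

In this simulated data, the profile with the highest churn risk is a lost cause, so a propensity threshold would spend the budget where the campaign changes nothing; ranking by estimated uplift points to the persuadable profile instead, and the negative uplift for the "sleeping dog" profile shows that an offer can even lower retention.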

The consumer world is full of counterfactual questions: how will people’s behavior change if I raise or lower retail prices (the effect of price on behavior)? If I show an advertisement to a customer, will they buy the product (the effect of advertising on purchases)? Answering such questions with data requires causal modeling.

A forecasting or prediction problem asks, for example, how many people will subscribe next month; a causal problem asks what would happen if some policy changed (e.g., how many more people would subscribe if we ran a campaign).

Causal analysis goes one step further: it aims to infer aspects of the data-generating process. With these, one can deduce not only the likelihood of events under static conditions but also the dynamics of events under changing conditions. This capability includes predicting the effect of actions (e.g., treatments or policy decisions), identifying the causes of reported events, and assessing responsibility and attribution (e.g., whether event x was necessary or sufficient for the occurrence of event y).

When we use supervised ML models that predict from correlational (and possibly spurious) patterns, we implicitly assume that things will continue as they have in the past. At the same time, the decisions we make and the actions we take based on those predictions actively change the environment, often in ways that break those patterns.

From Predictions to Decision-Making

For decision-making, we need to find the features that cause the outcome and estimate how the outcome would change if those features were changed. Many data science questions are causal questions, and estimating a counterfactual is common in decision-making scenarios.

A/B Experiments: If I change the color of a button on the site, will it lead to higher engagement?

Policy Decisions: If we adopt this treatment/policy, how will outcomes change? Will it lead to healthier patients, more revenue, etc.?

Policy Evaluation: Given the changes we made in the past and how outcomes changed afterward, did my policy help or hurt what I was trying to change?

Credit Attribution: Are people buying because of ad exposure? Would they have bought it anyway?

What Is Causation and Causal Effect?

An action or treatment (T) causes an outcome (Y) if and only if changing T leads to a change in Y, keeping everything else constant. “Causation implies that by varying one factor, I can make another vary” (Cook & Campbell 1979: 36, emphasis in original).

Example: Aspirin causes relief from my headache if and only if taking aspirin leads to a change in my headache, keeping everything else constant.

Similarly, a marketing campaign causes an increase in sales if and only if the campaign leads to a change in sales, keeping everything else constant.

The causal effect is the magnitude by which Y changes in response to a unit change in T (and not the other way around). For a binary treatment, the average causal effect is:

Causal effect = E[Y | do(T = 1)] − E[Y | do(T = 0)]   (Judea Pearl’s do-calculus)
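The gap between conditioning on T and intervening on T can be seen in a small simulation. This is a sketch with a hypothetical data-generating process: a confounder (customer loyalty) raises both the chance of receiving an offer and the chance of purchasing, and the offer's true effect is fixed at 0.10. Because we control the simulation, we can compute E[Y | do(T)] directly by forcing T to the same value for everyone.

```python
import random

TRUE_EFFECT = 0.10  # hypothetical: the offer raises purchase probability by 10 points


def purchase_prob(loyal, offered):
    """Hypothetical outcome model: loyalty and the offer both raise purchases."""
    return 0.2 + 0.5 * loyal + TRUE_EFFECT * offered


def simulate(n, do_t=None, seed=0):
    """Return (T, Y) pairs. If do_t is None, T depends on the confounder
    (observational regime); otherwise T is set to do_t for everyone."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        loyal = rng.random() < 0.5  # confounder Z
        if do_t is None:
            t = rng.random() < (0.8 if loyal else 0.2)  # loyal customers get more offers
        else:
            t = do_t  # intervention: do(T = do_t)
        y = rng.random() < purchase_prob(loyal, t)
        data.append((t, y))
    return data


def mean_y(data, t):
    """Average outcome among rows with treatment value t."""
    ys = [y for ti, y in data if ti == t]
    return sum(ys) / len(ys)


n = 200_000
obs = simulate(n)
naive = mean_y(obs, True) - mean_y(obs, False)  # E[Y | T=1] - E[Y | T=0]
do1 = simulate(n, do_t=True, seed=1)
do0 = simulate(n, do_t=False, seed=2)
causal = mean_y(do1, True) - mean_y(do0, False)  # E[Y | do(T=1)] - E[Y | do(T=0)]
print(f"naive difference: {naive:.3f}")
print(f"causal effect:    {causal:.3f}")
```

With these hypothetical numbers, the naive observational difference comes out near 0.4 because offered customers are mostly loyal ones, while the interventional difference stays near the true 0.10.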

Causal inference requires domain knowledge, assumptions, and expertise. The Microsoft ALICE Research team has developed the open-source DoWhy and EconML libraries to make our life easier. The first step in any causal analysis is posing a clear question:

- What treatment/action am I interested in?

- What outcome do I want to consider?

- What confounders might be correlated with both my outcome and my treatment?
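Once these three questions are answered, and assuming the listed confounders are the only ones (no unmeasured confounding), the treatment effect can be identified from observational data by adjusting for them. The sketch below is a plain-Python illustration, not the DoWhy or EconML API: it uses a hypothetical churn-campaign simulation with a single binary confounder (high CLV) and applies the backdoor adjustment formula, E[Y | do(T = t)] = Σ_z E[Y | T = t, Z = z] P(Z = z), by stratifying on that confounder.

```python
import random
from collections import defaultdict


def simulate_observational(n, seed=0):
    """Hypothetical churn-campaign data: high-CLV customers are more likely to be
    targeted (treatment T) and also more likely to be retained (outcome Y)."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        high_clv = rng.random() < 0.3                  # confounder Z
        t = rng.random() < (0.7 if high_clv else 0.2)  # targeting depends on Z
        p_retain = 0.6 + 0.3 * high_clv + 0.05 * t     # true effect of T: +0.05
        rows.append((high_clv, t, rng.random() < p_retain))
    return rows


def naive_effect(rows):
    """E[Y | T=1] - E[Y | T=0], ignoring the confounder."""
    treated = [y for _, t, y in rows if t]
    control = [y for _, t, y in rows if not t]
    return sum(treated) / len(treated) - sum(control) / len(control)


def backdoor_adjusted_effect(rows):
    """Sum over z of (E[Y | T=1, Z=z] - E[Y | T=0, Z=z]) * P(Z=z)."""
    stats = defaultdict(lambda: [0, 0])  # (z, t) -> [sum of y, count]
    z_counts = defaultdict(int)
    for z, t, y in rows:
        stats[(z, t)][0] += y
        stats[(z, t)][1] += 1
        z_counts[z] += 1
    n = len(rows)
    effect = 0.0
    for z, nz in z_counts.items():
        mean1 = stats[(z, True)][0] / stats[(z, True)][1]
        mean0 = stats[(z, False)][0] / stats[(z, False)][1]
        effect += (mean1 - mean0) * nz / n  # weight each stratum by P(Z=z)
    return effect


rows = simulate_observational(200_000)
print(f"naive difference:  {naive_effect(rows):.3f}")
print(f"backdoor-adjusted: {backdoor_adjusted_effect(rows):.3f}")
```

Under these hypothetical numbers, the naive difference (roughly 0.19 in expectation) overstates the campaign's effect because high-CLV customers were both targeted more and retained more, while stratifying on CLV recovers a value close to the true 0.05. If a real confounder were left out of the adjustment set, this estimate would be biased as well, which is why the confounder question matters.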

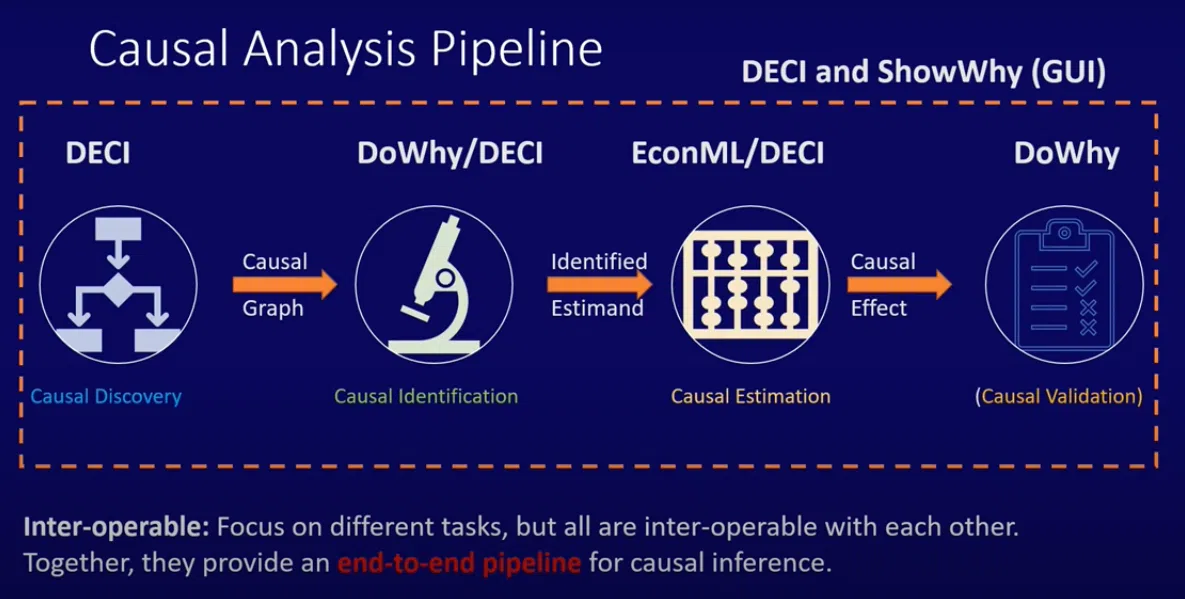

Causal analysis pipeline: Deep End-to-end Causal Inference (DECI), a deep-learning-based approach (Microsoft patent).

Causal Discovery – Causal Identification – Causal Estimation – Causal Validation
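The validation step checks whether an estimate survives tests that should break it. DoWhy's refutation methods include a placebo-treatment test; the sketch below illustrates only the idea in plain Python and is not DoWhy's implementation. It assumes a hypothetical randomized campaign (so a difference in means is a valid estimator), then re-estimates the effect after randomly permuting the treatment column: a sound estimate should collapse toward zero under the placebo.

```python
import random


def simulate_rct(n, true_effect=0.08, seed=0):
    """Hypothetical randomized campaign: T is a coin flip, Y is retention."""
    rng = random.Random(seed)
    ts, ys = [], []
    for _ in range(n):
        t = rng.random() < 0.5
        ts.append(t)
        ys.append(rng.random() < 0.6 + true_effect * t)
    return ts, ys


def diff_in_means(ts, ys):
    """Estimated effect: mean outcome of treated minus mean outcome of control."""
    treated = [y for t, y in zip(ts, ys) if t]
    control = [y for t, y in zip(ts, ys) if not t]
    return sum(treated) / len(treated) - sum(control) / len(control)


def placebo_refutation(ts, ys, n_rounds=20, seed=1):
    """Average effect estimated after shuffling the treatment column, which
    severs any real link between treatment and outcome."""
    rng = random.Random(seed)
    placebo_effects = []
    for _ in range(n_rounds):
        shuffled = ts[:]
        rng.shuffle(shuffled)
        placebo_effects.append(diff_in_means(shuffled, ys))
    return sum(placebo_effects) / n_rounds


ts, ys = simulate_rct(100_000)
estimate = diff_in_means(ts, ys)
placebo = placebo_refutation(ts, ys)
print(f"estimated effect: {estimate:.3f}")
print(f"placebo effect:   {placebo:.3f}")
```

If the placebo estimate were far from zero, that would signal a problem with the estimator or the data rather than a real treatment effect.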

Responsible AI Dashboard in Azure Machine Learning Studio: Causal Analysis

This feature builds on interpreting fitted models from the Model Registry and lets you explore what might have happened under a causal, rather than purely predictive, understanding of the same variables. We can look at the causal effects of different features and compare them with heterogeneous effects, and we can examine different cohorts to see which features or policies would work best for them.

- DECI provides a framework for end-to-end causal inference, which can also be used for discovery or estimation alone.

- EconML provides multiple causal estimation methods.

- DoWhy provides multiple identification and validation methods.

- ShowWhy provides no-code, end-to-end causal analysis in a user-friendly GUI for causal decision-making.

Summary

Modern machine learning and deep learning algorithms can find complex patterns in data, and explanations of these black-box algorithms tell us what the algorithm has learned about the world.

When we apply these trained models to policy decisions in society, such as loan approvals and health insurance policies, what they have learned about the world may not be a good representation of what is actually happening in the world.

Data-driven predictive models can be transparent, but they are not truly interpretable. Interpretability requires a causal model (as the Table 2 fallacy demonstrates). Causal models can reliably represent some processes in the world, and interpretable/explainable AI should be capable of causal reasoning to make effective decisions without bias.

Source: DZone

Hari Hara Bhagavathula is an Applied Machine Learning Researcher at Anblicks and has worked in data science since 2016, providing solutions for marketing and finance data science applications involving decision-making under uncertainty. His research interests are in social science and econometrics problems, experimentation with simulations, and responsible automated decision-making systems.