Implementing Event-Driven Autoscaling with Amazon SQS and KEDA

TL;DR

- Scaling is based on queue depth instead of CPU metrics.

- KEDA automatically adjusts Kubernetes worker pods based on demand.

- Scale-to-zero reduces idle infrastructure costs.

Modern cloud applications rarely operate at a steady pace. Traffic spikes arrive without warning, background jobs accumulate unpredictably, and scaling infrastructure purely on CPU or memory often produces the wrong outcome: either wasted resources or delayed processing.

This problem becomes especially clear in event-driven systems. When workloads are driven by queues rather than synchronous requests, CPU usage is a poor indicator of actual demand. A service may appear idle while thousands of messages wait to be processed. Traditional autoscaling assumes that infrastructure metrics reflect workload pressure. In practice, this leads to late scaling, overprovisioning, or services running idle “just in case.”

Event-driven autoscaling makes scaling decisions based on workload signals rather than resource consumption. Instead of watching CPU graphs, the system reacts directly to the number of messages waiting in the queue.

What Makes Event-Driven Scaling Different

Horizontal Pod Autoscaling works well for synchronous web services. CPU increases as traffic increases, so scaling decisions make sense. Queue-driven workloads behave differently.

Consider a worker consuming messages from Amazon SQS. While it processes one message at a time, CPU usage stays low, so the autoscaler sees no pressure. Meanwhile, thousands of messages may be waiting behind the one being processed. By the time CPU spikes and scaling begins, the backlog has already grown out of control.

Event-driven autoscaling reverses this logic. Instead of inferring demand indirectly, it observes the event source directly. If messages exist, there is work to do. If the queue is empty, there is not. This approach aligns scaling behaviour with reality.

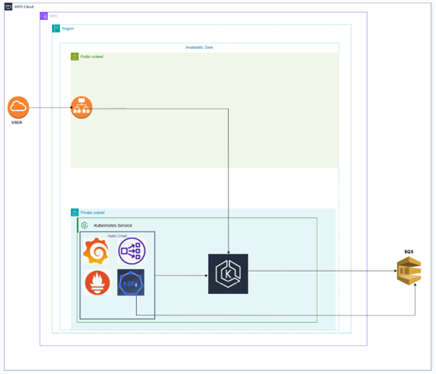

Architecture Overview

The architecture is intentionally simple but representative of real-world asynchronous systems. The system is made up of four core components:

- A simple web interface for submitting messages

- An Amazon SQS queue that decouples ingestion from processing

- Kubernetes worker pods that process messages

- KEDA, which monitors the queue and adjusts scaling accordingly

When a user submits a request, a message is placed into SQS. The request is acknowledged immediately, so the interface stays responsive no matter how long processing takes. Worker pods run inside a Kubernetes cluster and continuously poll the queue. These workers do not scale based on CPU or memory usage. Their replica count is driven entirely by queue depth.
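The ingestion and processing paths described above could be sketched roughly as follows. This is a minimal sketch rather than the project's actual code: it assumes a boto3-style SQS client is passed in (`send_message`, `receive_message`, and `delete_message` are boto3 SQS client calls), while the function names `submit_request` and `poll_once` and the JSON payload shape are hypothetical.

```python
import json
from typing import Any, Callable


def submit_request(sqs: Any, queue_url: str, payload: dict) -> str:
    """Enqueue the request and return right away with the SQS message ID."""
    response = sqs.send_message(QueueUrl=queue_url, MessageBody=json.dumps(payload))
    return response["MessageId"]


def poll_once(sqs: Any, queue_url: str, handle: Callable[[dict], None]) -> int:
    """Receive one batch, process each message, and delete it on success."""
    response = sqs.receive_message(
        QueueUrl=queue_url,
        MaxNumberOfMessages=10,  # SQS returns at most 10 messages per call
        WaitTimeSeconds=20,      # long polling; 20 seconds is the SQS maximum
    )
    messages = response.get("Messages", [])
    for message in messages:
        handle(json.loads(message["Body"]))
        # Delete only after the handler returns. If the handler raises, the
        # message stays in the queue and SQS redelivers it once the
        # visibility timeout expires.
        sqs.delete_message(QueueUrl=queue_url, ReceiptHandle=message["ReceiptHandle"])
    return len(messages)
```

In a real worker, `poll_once` would be called in a loop with a client created by `boto3.client("sqs")`. Passing the client in as a parameter keeps the sketch testable without AWS access.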

KEDA is the layer that integrates SQS and Kubernetes. It watches queue metrics and adjusts the number of worker pods on the fly. This clear separation of components keeps the system stable and resilient, and keeps the user-facing path responsive. Prometheus and Grafana were used for monitoring.

How KEDA Enables Event-Driven Autoscaling

KEDA extends Kubernetes by allowing it to scale workloads based on signals from external systems such as queues, streams, or databases. Scaling behaviour is described by a ScaledObject that links a Kubernetes deployment with an event source. KEDA regularly queries the event source (here, Amazon SQS) and determines how many replicas are required to process the backlog efficiently.

The logic is simple and transparent. When the backlog grows beyond what the current replicas are configured to handle, KEDA requests additional replicas. As the backlog drains, replicas are reduced accordingly. When there is no activity at all, the deployment can be scaled back to zero so that no resources are wasted on idling.

Example Configuration

    apiVersion: keda.sh/v1alpha1
    kind: ScaledObject
    metadata:
      name: <scaled-object-name>
    spec:
      scaleTargetRef:
        name: <deployment-name>
      minReplicaCount: 1
      triggers:
        - type: aws-sqs-queue
          metadata:
            queueURL: <aws-sqs-url>
            queueLength: "20"
            awsRegion: "<aws-region>"
          authenticationRef:
            name: keda-trigger-auth-aws-credentials

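In this setup, `queueLength` tells KEDA to aim for roughly twenty waiting messages per worker replica, so the deployment scales out as the backlog grows beyond that target. Because `minReplicaCount` is set to 1 here, one worker always stays running; setting it to 0, which is KEDA's default, enables the scale-to-zero behaviour described in the next section. Authentication is delegated to the referenced TriggerAuthentication, which uses AWS pod identity. This eliminates the need for static credentials and is in line with cloud-native security best practices.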
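To make the effect of `queueLength` concrete, the scaling decision can be approximated with a small function. This is a simplified sketch, not KEDA's implementation: the `aws-sqs-queue` scaler treats `queueLength` as a target number of messages per replica, and the real replica count is then computed by the Horizontal Pod Autoscaler, which also applies tolerances and stabilization windows that are ignored here. The function name `desired_replicas` is hypothetical; the defaults of 0 and 100 mirror KEDA's default `minReplicaCount` and `maxReplicaCount`.

```python
import math


def desired_replicas(queue_depth: int, queue_length: int,
                     min_replicas: int = 0, max_replicas: int = 100) -> int:
    """Replicas needed so each one handles about `queue_length` messages."""
    if queue_length <= 0:
        raise ValueError("queue_length must be positive")
    needed = math.ceil(queue_depth / queue_length)
    # Clamp to the configured bounds of the ScaledObject.
    return max(min_replicas, min(max_replicas, needed))


print(desired_replicas(0, 20))                  # 0 (scale to zero)
print(desired_replicas(100, 20))                # 5
print(desired_replicas(101, 20))                # 6
print(desired_replicas(0, 20, min_replicas=1))  # 1 (as in the configuration above)
```

With the configuration shown, a backlog of 100 messages would therefore call for about five workers, and an empty queue would still keep one worker running.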

The Importance of Scale-to-Zero

Most autoscaling mechanisms, including the Horizontal Pod Autoscaler in its default configuration, keep a minimum of one replica. That single pod runs continuously, so resources are consumed even when there is no work. KEDA allows scaling to zero. When there is no work in the queue, all worker pods are terminated. When a new message arrives, KEDA detects it and brings the deployment back online.

This behaviour is particularly valuable for workloads with natural idle periods—batch jobs, webhook processors, scheduled tasks, or background pipelines. While cold-start latency exists, it is often an acceptable trade-off for significantly reduced infrastructure costs.

Operational Considerations

Event-driven autoscaling is most effective when paired with well-designed workers. Workers must be idempotent because messages may be redelivered, and duplicate processing should not corrupt system state. It is also important that workers shut down gracefully. When Kubernetes scales down a pod, the worker should stop consuming new messages, complete its in-flight work, and exit cleanly.
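Both requirements can be illustrated with a short sketch. It is an assumption-laden example rather than the project's worker: the names `IdempotentProcessor`, `run_worker`, and `install_signal_handlers` are hypothetical, deduplication uses an in-memory set (a real deployment would need a store shared across replicas), and `fetch_batch` stands in for whatever function polls the queue. The one fixed point is that Kubernetes sends SIGTERM to a pod's containers before terminating them.

```python
import signal
import threading
from typing import Callable, Iterable, Tuple

# Set when the pod is asked to terminate; checked between batches.
stop_requested = threading.Event()


def install_signal_handlers() -> None:
    """Call once from the main thread at worker start-up."""
    signal.signal(signal.SIGTERM, lambda signum, frame: stop_requested.set())


class IdempotentProcessor:
    """Applies each message ID at most once, even if it is delivered again."""

    def __init__(self, apply: Callable[[dict], None]) -> None:
        self._apply = apply
        self._seen = set()  # assumption: in-memory only, for illustration

    def process(self, message_id: str, body: dict) -> bool:
        if message_id in self._seen:
            return False  # duplicate delivery: skip without side effects
        self._apply(body)
        self._seen.add(message_id)
        return True


def run_worker(fetch_batch: Callable[[], Iterable[Tuple[str, dict]]],
               processor: IdempotentProcessor) -> int:
    """Process batches until a stop is requested; return the processed count."""
    processed = 0
    while not stop_requested.is_set():
        # A batch that has already been fetched is finished before exiting,
        # so in-flight work is not abandoned on scale-down.
        for message_id, body in fetch_batch():
            if processor.process(message_id, body):
                processed += 1
    return processed
```

For this to work in a cluster, the time needed to finish one batch has to fit within the pod's termination grace period; otherwise Kubernetes kills the process before the loop exits.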

Observability closes the loop. Queue depth, replica count, processing latency, and error rates should be monitored together. Scaling is only beneficial if additional replicas result in higher throughput. If downstream bottlenecks exist, such as databases, APIs, or rate limits, adding replicas may not help.
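One way to check whether extra replicas actually pay off is to compare observed throughput with what linear scaling would predict. The helper below is a hypothetical illustration, not part of KEDA or of the project's dashboards; the name `scaling_efficiency` and the choice of messages per second as the unit are assumptions, and the input values would come from the monitoring stack.

```python
def scaling_efficiency(throughput_before: float, replicas_before: int,
                       throughput_after: float, replicas_after: int) -> float:
    """Observed throughput after scale-up divided by the linear expectation."""
    if replicas_before <= 0 or replicas_after <= replicas_before:
        raise ValueError("expected a scale-up from at least one replica")
    if throughput_before <= 0:
        raise ValueError("throughput_before must be positive")
    # With perfect linear scaling, throughput grows in proportion to replicas.
    expected = throughput_before * replicas_after / replicas_before
    return throughput_after / expected
```

A value near 1.0 suggests the workers themselves were the limit. For example, going from 2 to 4 replicas while throughput only moves from 100 to 110 messages per second gives 0.55, which points to a downstream bottleneck rather than a shortage of workers.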

When Event-Driven Autoscaling Is Not a Fit

Event-driven scaling does not suit every workload. CPU-based autoscaling is usually the better option for synchronous APIs, where readiness and low latency matter most and CPU load tracks request volume. Like any architectural pattern, event-driven autoscaling is most effective when it is applied deliberately.

Conclusion

Event-driven autoscaling fundamentally changes how scalability is handled in cloud-native systems. Services do not wait for infrastructure signals before reacting. Instead, they respond directly to the real demand of the workload.

By integrating Amazon SQS, Kubernetes, and KEDA, this project shows a viable way of building asynchronous systems that scale efficiently, remain cost-effective, and avoid unnecessary complexity. As distributed architectures become more complex, event-driven autoscaling is turning into a basic pattern rather than a sophisticated optimization. The project offers a realistic instance of that pattern, implemented in a practical, predictable way with measurable benefits.

As a CloudOps Engineer, I focus on building and managing scalable cloud infrastructure. My role involves cloud deployments, infrastructure management, monitoring, and automation to ensure reliable and efficient cloud operations. I also work with DevOps practices and automation tools to support modern cloud-native applications and improve operational efficiency. Passionate about AI and data-driven technologies, I continually explore new ways to build scalable, innovative cloud solutions.