The landscape of AI development is rapidly shifting from cloud-only experiments to powerful local workflows. At the center of this shift lies Docker, a tool synonymous with containers, which is now evolving into a comprehensive platform for building and running AI agents directly on your machine without exposing code to online AI services. This move brings the familiar, reproducible Docker workflow to the world of intelligent applications.

A Unified Platform for AI Development

Docker is not just adding AI support; it is creating an integrated ecosystem. The vision is to make the journey from a local prototype to a fully deployed agentic application as seamless as it is for a traditional web application. The key components of the platform include:

- Docker Model Runner (DMR): This tool lets you pull and run open-source AI models directly from Docker Hub, much as you would container images. The models are served locally through OpenAI-compatible APIs for easy integration.

- Model Context Protocol (MCP) Integration: Docker has embraced MCP, an emerging standard that acts as a universal connector between AI agents and external tools. Through Docker’s curated MCP Catalog, users can access a suite of containerized tools, from GitHub to web browsers, that agents can use safely and without dependency conflicts.

- Docker Compose for AI: With recent updates, you can define and run AI models and services together in a single, portable compose.yaml file, treating models as first-class citizens within the stack.
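Because the models are served through OpenAI-compatible APIs, a client needs nothing beyond an HTTP library. The sketch below shows one way to build and send a chat request using only the Python standard library. It rests on assumptions that are not from this article: that Model Runner's host-side TCP endpoint is enabled at `http://localhost:12434/engines/v1`, and that a model tagged `ai/smollm2` has already been pulled. The `LLM_URL` and `LLM_MODEL` environment variables are hypothetical overrides chosen for this example, so adjust all of these to your own setup.

```python
import json
import os
import urllib.request

# Assumptions (not from the article): the endpoint and model tag below are
# illustrative defaults. LLM_URL / LLM_MODEL are hypothetical override names.
BASE_URL = os.environ.get("LLM_URL", "http://localhost:12434/engines/v1")
MODEL = os.environ.get("LLM_MODEL", "ai/smollm2")


def build_chat_request(prompt, base_url=BASE_URL, model=MODEL):
    """Build an OpenAI-style chat completion request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt):
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    print(ask("Summarize what a container image is in one sentence."))
```

Keeping request construction separate from the network call makes the payload easy to inspect or test without a running model.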

The Heart of Local Safety: Docker Sandboxes

The most critical innovation for local development is Docker Sandboxes, an experimental feature designed to give AI agents like Claude Code autonomy with far less risk. Running an AI agent with full system access can be dangerous: the risks range from accidental file deletion to sophisticated prompt injection attacks.

A Docker Sandbox addresses this problem by creating an isolated container that mirrors your local workspace. When you run `docker sandbox run <AI-agent>`, Docker mounts your current project directory into the container at the same file path. This means that:

- Security: The agent operates in an isolated environment. It can execute commands, install packages, and modify files, but changes outside the mounted project directory stay confined to the sandbox, and the rest of your host machine is out of its reach.

- Familiarity: The workspace path is identical inside and outside the container, so file paths in error messages match and scripts with hard-coded paths work as expected.

- Persistence: Docker enforces one sandbox per workspace. State such as installed packages or temporary files persists across sessions, which allows iterative development without starting from scratch each time.
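Since the sandbox is tied to the directory you launch it from, it can help to wrap the launch in a small script that always starts from the intended project root. The following is a minimal sketch of such a wrapper. It assumes only the `docker sandbox run <AI-agent>` form quoted above. The agent name `claude` and both helper functions are illustrative choices for this example, not part of Docker's tooling.

```python
import shutil
import subprocess
from pathlib import Path


def sandbox_command(agent):
    """Return the argv for `docker sandbox run <agent>`."""
    if not agent or agent.startswith("-"):
        raise ValueError("agent must be a plain name such as 'claude'")
    return ["docker", "sandbox", "run", agent]


def run_in_workspace(agent, workspace):
    """Start the agent's sandbox with `workspace` as the current directory."""
    project = Path(workspace).resolve()
    if not project.is_dir():
        raise NotADirectoryError(project)
    if shutil.which("docker") is None:
        raise RuntimeError("docker CLI not found on PATH")
    # The current directory is what gets mounted at the same path inside the
    # sandbox, so launching from the same project should reuse its sandbox.
    return subprocess.run(sandbox_command(agent), cwd=project).returncode


if __name__ == "__main__":
    # "claude" is an assumed agent name; substitute the agent you use.
    raise SystemExit(run_in_workspace("claude", "."))
```

Resolving the path first means the script launches from the same absolute directory however it is invoked, which matches the one-sandbox-per-workspace behavior described above.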

The Practical Workflow: From Local to Cloud

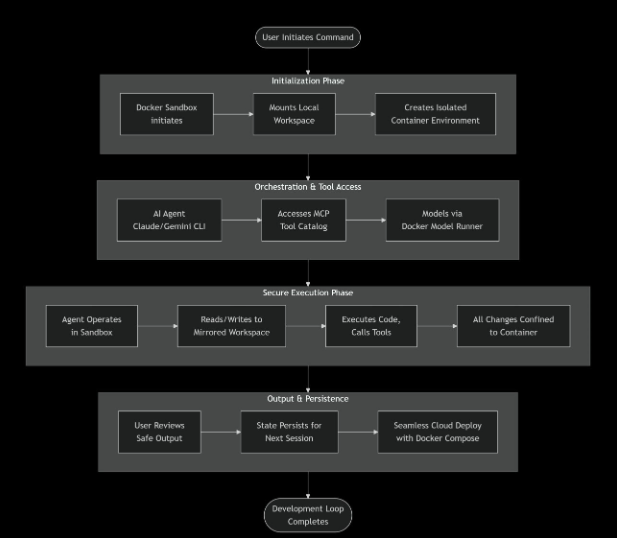

This flowchart illustrates the secure, end-to-end process of running an AI agent on Docker’s platform. Here’s what happens at each key stage:

- Initialization Phase – The process begins when you execute a command such as `docker sandbox run`. Docker creates a secure, isolated container that mirrors your local project directory. This sandbox is the agent’s entire world: it cannot reach beyond it into the rest of your system.

- Orchestration & Tool Access – Inside the sandbox, your chosen AI agent (such as Claude Code or Gemini CLI) boots up. The agent has controlled access to a suite of containerized tools through Docker’s MCP Catalog, so it can interact with GitHub or perform web searches safely. Models served by Docker Model Runner are also available through local APIs, completing the integrated toolkit.

- Secure Execution Phase – This is where the autonomous work happens. The agent reads your code, writes new files, runs commands, and uses the available MCP tools. Every action runs inside the container. Edits land in the mounted workspace, while files elsewhere on your host machine stay untouched unless you explicitly allow otherwise.

- Output & Next Steps – You review the agent’s work (the code changes, created files, and logs), all generated within the sandboxed environment. The sandbox state persists, so you can start a new session right where you left off. Finally, because the environment is defined in a compose.yaml file, it is portable: you can deploy the same setup to the cloud, closing the loop from local experimentation to production deployment.
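For the review step, one lightweight option is to list what changed in the workspace after an agent session. The sketch below assumes the project is a Git repository and that `git` is on your PATH. Neither is stated in this article, and the helper names are illustrative. It parses the output of `git status --porcelain`, where each line is a two-character status code, a space, and a path. Paths that Git quotes because of special characters are not unquoted here.

```python
import subprocess


def parse_porcelain(output):
    """Map each changed path to its two-character `git status --porcelain` code."""
    changes = {}
    for line in output.splitlines():
        if len(line) < 4:
            continue
        status, path = line[:2], line[3:]
        # Renames and copies are reported as "old -> new"; keep the new path.
        if " -> " in path and status[0] in "RC":
            path = path.split(" -> ", 1)[1]
        changes[path] = status
    return changes


def changed_files(workspace="."):
    """Run git in the workspace and return the parsed status mapping."""
    result = subprocess.run(
        ["git", "status", "--porcelain"],
        cwd=workspace, capture_output=True, text=True, check=True,
    )
    return parse_porcelain(result.stdout)


if __name__ == "__main__":
    for path, status in sorted(changed_files().items()):
        print(f"{status} {path}")
```

Running this before accepting a session's output gives a quick inventory of modified and newly created files to inspect.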

This flow turns the developer into a strategic supervisor: it provides a safe, tool-rich playground for AI agents and enables a professional, reproducible workflow for building agentic applications.